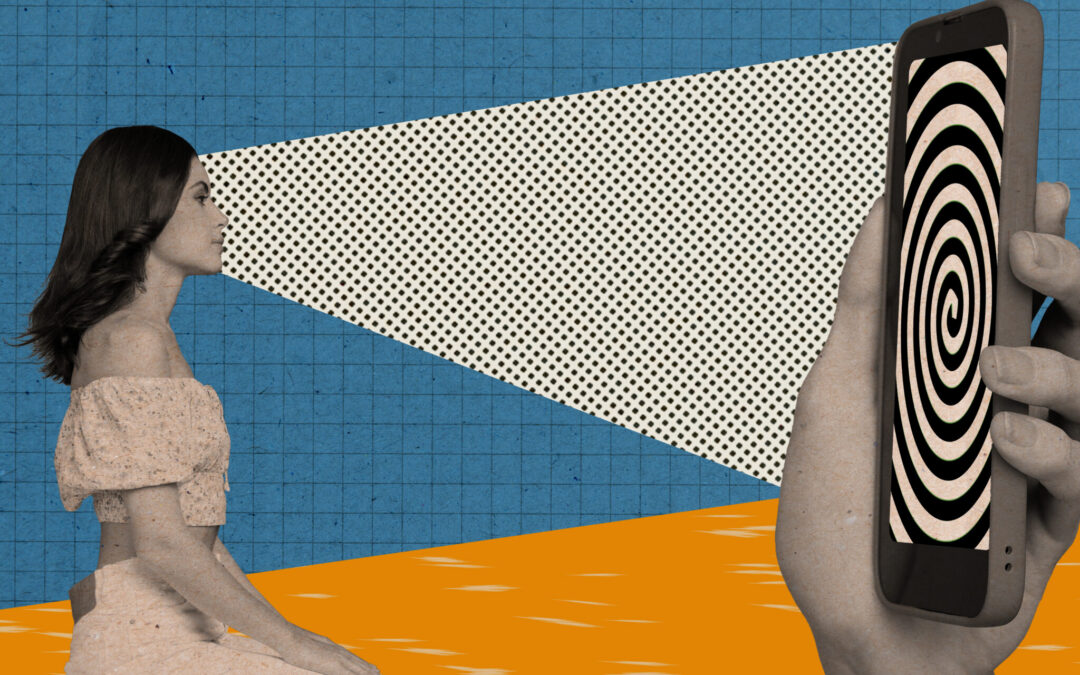

That moment when your phone serves up a coupon right as you walk past a store — that’s not coincidence. It’s geofencing: your location data triggering a behavioral nudge timed to maximize your chance of walking through the door.

According to a growing body of research from military analysts and national security institutions, the commercial infrastructure doing that job is functionally identical to what governments are now using to shape public opinion about war.

Location Data as a Behavioral Blueprint

When a marketing platform knows where you are and pairs that with thousands of data points about what you buy, read, share, and scroll past, it can build a surprisingly accurate model of what you’re likely to believe. A Fall 2025 analysis published in the US Army’s Gray Space journal — written by a West Point-trained Cyber Operations officer — lays out the mechanism directly: malign influence actors adopt the exact same surveillance capitalism framework that commercial advertisers use to profile individuals, categorizing users into behavioral clusters and predicting their response to new content.

The profiling doesn’t just capture what you buy. Research shows that consumer behavior reliably reveals political behavior — meaning the data pipeline that decides which ad reaches your phone is the same one a state-backed operation uses to decide which version of a conflict narrative to deliver to which population.

Thousands of Narratives, Tested in Real Time

Cold War propaganda was broadcast: one message, mass audience, blunt instrument. Modern influence operations work more like A/B testing. Strategists deploy thousands of narrative variations simultaneously, monitor which framings generate engagement from which demographic segments, and refine their approach in near-real time. Generative AI has dramatically lowered the cost of this work: content that once required human writers and translators can now be generated and delivered at machine speed, customized down to the individual user.

The goal isn’t to convince everyone. The Atlantic Council’s Digital Forensic Research Lab, in its 2026 geopolitics outlook, describes operations increasingly designed to be continuous rather than episodic, blending AI-generated content with commercial infrastructure in ways that are harder to detect and attribute precisely because they look like normal advertising.

Geofencing Churches, Targeting Worshippers

The leap from theory to practice is already documented. Foreign Agents Registration Act (FARA) filings revealed a campaign, funded by a foreign government ministry, that proposed geofencing churches across four US states during worship hours and delivering targeted political messaging to congregants’ phones. The pitch described it as the largest Christian church geofencing operation in US history.

The technology enabling that proposal is commercially available marketing infrastructure. No novel espionage required.

Meanwhile, Vanderbilt researchers analyzing leaked documents from the Chinese firm GoLaxy found an AI system running influence campaigns against civilian populations at a precision and scale that previously required significant human intelligence resources. National security experts described the findings as potentially redefining information warfare.

When Real Content, Perfectly Timed, Becomes the Weapon

The subtler risk isn’t fake content — it’s real content, delivered to you at the moment and in the framing most likely to shift your opinion, so precisely calibrated to your behavioral profile that you have no particular reason to notice it’s happening. The Army analysis argues this presents a challenge that conventional countermeasures aren’t equipped to handle: if every user receives uniquely generated content, the cross-referencing tools typically used to identify influence campaigns stop working.

The Atlantic Council’s DFRLab puts it plainly — this is disinformation that happens where analysts can’t see it, inside deployed AI systems that can’t be audited from the outside.

What Consumer Privacy Has to Do With Any of This

The Army officer’s proposed solution isn’t more surveillance or censorship — it’s stronger consumer privacy law. The argument: because influence operations run on the same data brokers and behavioral profiling systems as commercial advertising, limiting what those systems can collect degrades the targeting infrastructure available to malign actors as a side effect. The EU’s GDPR, which gives citizens the right to sue companies for unauthorized data collection, is held up as a model worth studying.

The US has no equivalent federal privacy law. Every data point a retailer collects about your habits is, in principle, available to the same profiling pipeline used in influence operations.

Noticing the Nudge

Understanding that the coupon and the war narrative are products of the same system doesn’t neutralize the system, but it does change what critical thinking about your own opinions actually requires.

The phone that knows you’re near a shoe store also knows a great deal about what you’re likely to believe about a conflict on the other side of the world.